Step3-VL-10B: How a 10B Vision-Language Model Rivals Models 10-20x Larger

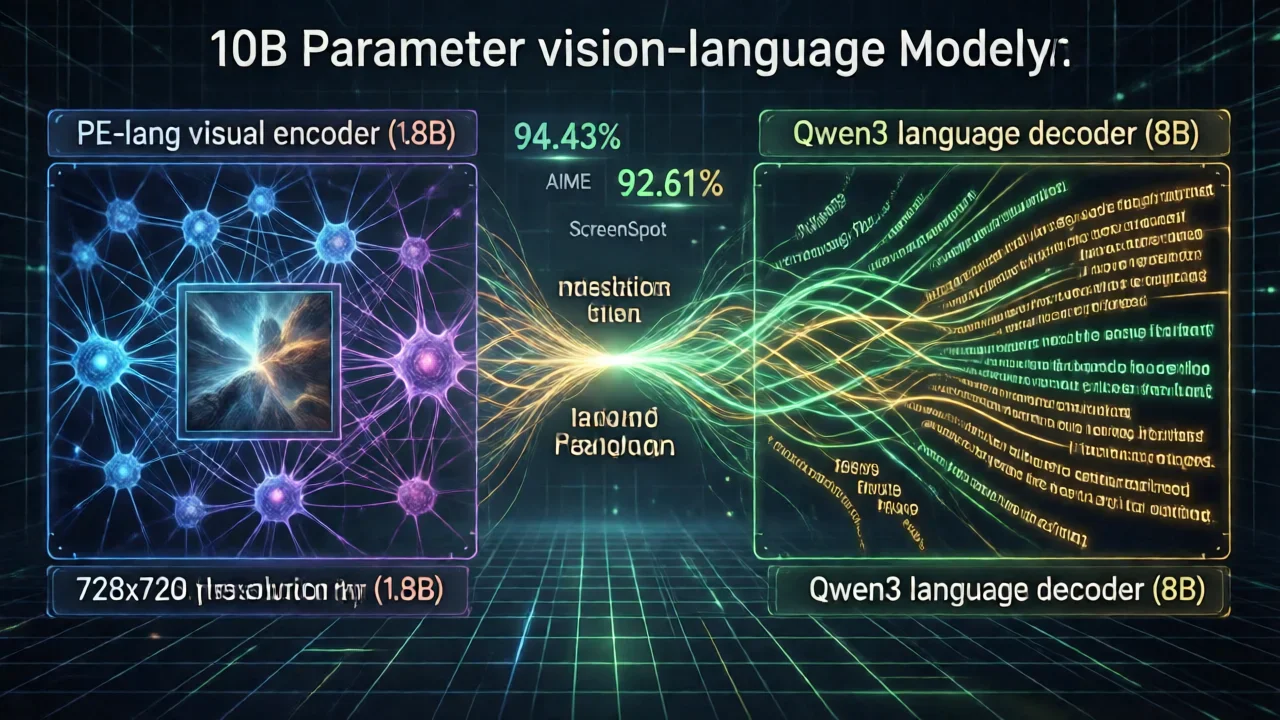

Stepfun AI released Step3-VL-10B in January 2026. It is a 10-billion-parameter vision-language model that, on the benchmarks reported below, performs on par with models 10 to 20 times larger. It pairs a 1.8B PE-lang visual encoder with an 8B Qwen3 language decoder. If you need a vision-language model for STEM reasoning, document understanding, or GUI interaction, this one is worth a close look.

What Makes Step3-VL-10B Revolutionary?

Instead of simply adding parameters, Stepfun AI focused on getting more performance out of each one through architecture and training choices.

The PE-lang Advantage

The key innovation is PE-lang (Language-Optimized Perception Encoder)—a 1.8B visual encoder built specifically for language-heavy tasks. Most vision encoders focus on extracting visual features. PE-lang does something different: it extracts information in a way that language models can actually reason about effectively.

Key architectural innovations:

- Multi-crop resolution strategy: 728×728 global view combined with multiple 504×504 local crops

- 16× spatial downsampling: Two stride-2 projection layers halve each side twice (4× per side), which cuts the number of visual tokens by 16×

- Language-aligned tokenization: Visual tokens optimized for seamless integration with language models

This design explains why Step3-VL-10B does well on tasks that require deep semantic understanding: the encoder is trained to produce features the language decoder can reason over directly.
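To make the token arithmetic concrete, here is a rough sketch of how many visual tokens each view would produce. The 728 and 504 pixel sizes and the 16× reduction come from the list above; the 14-pixel patch size is an assumption (both resolutions divide evenly by 14), and the function names are made up for illustration.

```python
def tokens_per_view(side_px: int, patch_px: int = 14, downsample_per_side: int = 4) -> int:
    """Visual tokens for one square view after patching and downsampling.

    patch_px=14 is an assumption, not a confirmed Step3-VL-10B setting.
    downsample_per_side=4 corresponds to two stride-2 layers (16x fewer tokens).
    """
    patches_per_side = side_px // patch_px
    tokens_per_side = patches_per_side // downsample_per_side
    return tokens_per_side * tokens_per_side


def total_visual_tokens(num_local_crops: int) -> int:
    """One 728x728 global view plus `num_local_crops` 504x504 local crops."""
    return tokens_per_view(728) + num_local_crops * tokens_per_view(504)


print(tokens_per_view(728))    # 169 tokens for the global view
print(tokens_per_view(504))    # 81 tokens per local crop
print(total_visual_tokens(4))  # 493 tokens for one global view and four crops
```

Under these assumptions, each extra local crop costs 81 tokens, so the visual token budget grows linearly with the number of crops.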

Unified Training Pipeline

Step3-VL-10B is trained in three stages:

Pre-training Phase:

- 1.2 trillion tokens of multimodal data

- Single-stage, fully unfrozen training strategy

- Comprehensive coverage of visual and textual domains

Supervised Fine-tuning (SFT):

- Approximately 226 billion tokens

- Two-stage approach for progressive capability development

- Focus on instruction-following and reasoning tasks

Reinforcement Learning (RL):

- Over 1,400 RL iterations combining multiple strategies

- RLVR (Reinforcement Learning with Verifiable Rewards)

- RLHF (Reinforcement Learning from Human Feedback)

- PaCoRe (Parallel Coordinated Reasoning) training

This multi-stage approach ensures the model develops robust reasoning capabilities while maintaining visual understanding accuracy.

Performance Benchmarks: Step3-VL-10B vs. Larger Models

The most compelling evidence of Step3-VL-10B's efficiency is its performance against significantly larger competitors.

STEM Reasoning Excellence

Step3-VL-10B demonstrates exceptional performance on mathematics and physics benchmarks:

| Benchmark | Step3-VL-10B | Larger models (approx.) | Advantage (percentage points) |
|---|---|---|---|
| AIME 2025 | 94.43% (PaCoRe) | ~85-90% | +4-9 |
| HMMT 2025 | 92.14% (PaCoRe) | ~80-85% | +7-12 |
| MathVision | 75.95% (PaCoRe) | ~65-70% | +6-11 |
| OCRBench | 89.00% | ~80-85% | +4-9 |

These results are particularly impressive considering Step3-VL-10B achieves them with 10-20× fewer parameters than competing models.

General Vision-Language Understanding

Beyond STEM reasoning, Step3-VL-10B maintains competitive performance across diverse benchmarks:

| Benchmark | Step3-VL-10B | Category |
|---|---|---|
| MMMU | 78.11% | Multimodal reasoning |
| MMBench (EN) | 92.05% | General visual understanding |
| MathVista | 83.97% | Mathematical visual reasoning |
| ScreenSpot-V2 | 92.61% | GUI understanding |

The ScreenSpot-V2 score stands out: 92.61% indicates that Step3-VL-10B can locate and interpret user interface elements reliably, which makes it useful for automation and accessibility applications.

The PaCoRe Advantage

Many of Step3-VL-10B's top scores use PaCoRe (Parallel Coordinated Reasoning), an inference-time technique that aggregates 16 parallel reasoning rollouts. This approach:

- Enhances reasoning accuracy without retraining

- Increases inference cost proportionally to the number of rollouts

- Provides a tunable performance-efficiency tradeoff

- Particularly effective for complex reasoning tasks

For applications where accuracy is paramount, PaCoRe mode offers significant performance gains. For latency-sensitive applications, standard inference mode provides excellent performance with lower computational overhead.
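To get a feel for how aggregating parallel rollouts trades compute for accuracy, here is a minimal sketch. Note that it uses plain majority voting (self-consistency) as a simpler stand-in, not PaCoRe's actual aggregation mechanism, which this post does not describe in detail. The `generate` callable and `fake_generate` are hypothetical placeholders for a real model call.

```python
from collections import Counter
from itertools import cycle
from typing import Callable


def majority_vote(generate: Callable[[str], str], prompt: str, num_rollouts: int = 16) -> str:
    """Sample `num_rollouts` answers and return the most frequent one.

    Cost scales linearly: 16 rollouts means 16 model calls.
    """
    answers = [generate(prompt) for _ in range(num_rollouts)]
    winner, _ = Counter(answers).most_common(1)[0]
    return winner


# Hypothetical stand-in for a model call: right three times out of four.
_fake_answers = cycle(["42", "42", "41", "42"])


def fake_generate(prompt: str) -> str:
    return next(_fake_answers)


print(majority_vote(fake_generate, "What is 6 * 7?"))  # prints 42
```

A single rollout from this fake model is wrong a quarter of the time, while the vote over 16 rollouts lands on the majority answer. That is the basic intuition behind spending more inference compute on hard reasoning problems.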

Technical Specifications and Hardware Requirements

Understanding Step3-VL-10B's technical requirements is essential for deployment planning.

Model Architecture Details

| Component | Specification |
|---|---|
| Total Parameters | 10 billion |
| Visual Encoder (PE-lang) | 1.8 billion parameters |
| Language Decoder (Qwen3) | 8 billion parameters |
| Model Weights Size | 20 GB |
| Data Type | BF16 (Brain Float 16) |
| Visual Resolution | 728×728 global + 504×504 local crops |
| Spatial Downsampling | 16× compression |
| License | Apache 2.0 |

Hardware Requirements

Minimum Configuration for Inference:

- VRAM Required: 24 GB minimum

- Recommended GPUs: RTX 4090, A100, H100

- Model Weights: 20 GB

- Runtime Overhead: ~4 GB

- Total Memory: ~24 GB
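The 20 GB figure follows directly from the parameter count: BF16 stores each parameter in 2 bytes. A quick sketch of the arithmetic, using decimal gigabytes (10^9 bytes) and the ~4 GB runtime overhead quoted above; the helper name is made up for illustration.

```python
def weights_gb(num_params: float, bytes_per_param: float) -> float:
    """Size of the model weights in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


bf16_weights = weights_gb(10e9, 2)   # 10B parameters x 2 bytes each
runtime_overhead = 4.0               # GB, figure quoted in the list above
total = bf16_weights + runtime_overhead

print(bf16_weights)  # 20.0
print(total)         # 24.0
```

This leaves essentially no headroom on a 24 GB card, which is why longer contexts, batching, or PaCoRe mode push you toward the 40-80 GB configurations.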

Recommended Configuration for Production:

- VRAM: 40-80 GB (for batching and PaCoRe mode)

- GPU: A100 (80GB) or H100 (80GB)

- Storage: 30 GB (model + cache)

Software Requirements:

- Python 3.10 or later

- PyTorch ≥ 2.1.0

- Transformers 4.57.0

- CUDA 11.8 or later (for GPU inference)

Inference Format

Step3-VL-10B operates exclusively in BF16 (Brain Float 16) format. This precision level:

- Maintains numerical stability for deep reasoning

- Reduces memory requirements compared to FP32

- Provides sufficient precision for vision-language tasks

- Is widely supported by modern GPUs

Quantization to INT8 or INT4 is not officially supported, though community efforts may explore this direction.

Core Capabilities and Use Cases

Step3-VL-10B excels across multiple domains, each leveraging different aspects of its architecture.

1. STEM Problem Solving

The model's exceptional STEM reasoning performance makes it ideal for:

- Mathematics tutoring: Solving and explaining complex mathematical problems

- Physics simulations: Understanding and analyzing physics diagrams

- Chemistry visualization: Interpreting molecular structures and reactions

- Engineering analysis: Understanding technical diagrams and specifications

Example use case: A student uploads a handwritten math problem. Step3-VL-10B analyzes the image, recognizes the mathematical notation, and provides step-by-step solutions.

2. Document Understanding and OCR

With 89% OCRBench performance, Step3-VL-10B handles:

- Document digitization: Converting scanned documents to structured data

- Form processing: Extracting information from forms and applications

- Receipt analysis: Understanding and categorizing receipt content

- Invoice processing: Automated invoice data extraction

The model's multi-crop resolution strategy ensures it captures both fine details (local crops) and overall document structure (global view).

3. GUI and Screen Understanding

The 92.61% ScreenSpot-V2 score demonstrates capability for:

- UI automation: Understanding and interacting with application interfaces

- Accessibility: Describing screen content for visually impaired users

- Testing automation: Identifying UI elements for automated testing

- Mobile app analysis: Understanding mobile application layouts

4. Visual Question Answering

Step3-VL-10B can answer complex questions about images:

- Scene understanding: Describing what's happening in images

- Object relationships: Understanding spatial relationships between objects

- Contextual reasoning: Inferring information not explicitly visible

- Multi-step reasoning: Answering questions requiring multiple reasoning steps

Deployment Options

Step3-VL-10B supports multiple deployment approaches, each optimized for different use cases.

Option 1: Hugging Face Transformers (Development)

For development and experimentation, use the standard Transformers library:

    from transformers import AutoProcessor, AutoModelForCausalLM

    model_path = "stepfun-ai/Step3-VL-10B"

    # trust_remote_code=True runs the model's custom code from the Hub
    processor = AutoProcessor.from_pretrained(model_path, trust_remote_code=True)
    model = AutoModelForCausalLM.from_pretrained(
        model_path,
        trust_remote_code=True,
        device_map="auto",
        torch_dtype="auto"
    ).eval()

    # Prepare input
    messages = [
        {
            "role": "user",
            "content": [
                {"type": "image", "url": "image_url_or_path"},
                {"type": "text", "text": "What's in this image?"}
            ]
        }
    ]

    # Generate response
    inputs = processor.apply_chat_template(
        messages, add_generation_prompt=True, tokenize=True,
        return_dict=True, return_tensors="pt"
    ).to(model.device)

    generate_ids = model.generate(**inputs, max_new_tokens=1024)

    # Decode only the newly generated tokens, skipping the prompt
    response = processor.decode(generate_ids[0, inputs["input_ids"].shape[-1]:], skip_special_tokens=True)
    print(response)

Advantages:

- Simple setup and experimentation

- Direct access to model internals

- Suitable for research and prototyping

Limitations:

- Single-request processing

- No built-in batching optimization

- Limited production features

Option 2: vLLM (Production API)

For production deployments requiring OpenAI-compatible APIs:

    vllm serve stepfun-ai/Step3-VL-10B \
        -tp 1 \
        --reasoning-parser deepseek_r1 \
        --enable-auto-tool-choice \
        --tool-call-parser hermes \
        --trust-remote-code

Advantages:

- OpenAI-compatible API

- Efficient batching and scheduling

- Support for advanced reasoning modes

- Production-ready performance

Ideal for:

- REST API services

- Batch processing

- Multi-user applications
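Once the server is running, any OpenAI-compatible client can talk to it. Below is a minimal standard-library sketch that builds a chat request with an inline base64 image. The base URL assumes vLLM's default port 8000 on localhost, and the function names are made up for illustration; adjust both to your deployment.

```python
import base64
import json
import urllib.request


def build_chat_payload(image_bytes: bytes, question: str,
                       model: str = "stepfun-ai/Step3-VL-10B",
                       mime: str = "image/jpeg") -> dict:
    """Build an OpenAI-style chat request with the image inlined as a data URL."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "model": model,
        "messages": [{
            "role": "user",
            "content": [
                {"type": "image_url", "image_url": {"url": f"data:{mime};base64,{encoded}"}},
                {"type": "text", "text": question},
            ],
        }],
        "max_tokens": 1024,
    }


def ask(payload: dict, base_url: str = "http://localhost:8000/v1") -> str:
    """POST the payload to the chat completions endpoint and return the answer text."""
    request = urllib.request.Request(
        f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

With a server up, usage would look like `ask(build_chat_payload(open("receipt.jpg", "rb").read(), "List the line items."))`, where `receipt.jpg` is a placeholder file name.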

Option 3: SGLang (High-Performance Inference)

For maximum performance and advanced features:

    sglang serve \
        --model-path stepfun-ai/Step3-VL-10B \
        --trust-remote-code \
        --port 2345 \
        --reasoning-parser deepseek-r1 \
        --tool-call-parser hermes

Advantages:

- Optimized inference performance

- Advanced scheduling algorithms

- Support for complex reasoning workflows

- Flexible deployment options

Ideal for:

- High-throughput applications

- Complex reasoning tasks

- Research and experimentation

Performance Optimization Strategies

To maximize Step3-VL-10B's efficiency in production:

1. Batch Processing

Process multiple requests simultaneously to improve GPU utilization:

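- Batch size 4-8 as a starting point for 24GB VRAM; headroom is tight because weights plus runtime overhead already total about 24 GB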

- Batch size 16-32 for 80GB VRAM

- Monitor memory usage and adjust accordingly
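If you are batching requests yourself rather than relying on a serving framework, a small chunking helper is enough to get started. This is a generic sketch, not something specific to Step3-VL-10B; the helper name is made up for illustration.

```python
from typing import Iterator, List, Sequence, TypeVar

T = TypeVar("T")


def batched(items: Sequence[T], batch_size: int) -> Iterator[List[T]]:
    """Yield consecutive chunks of at most `batch_size` items."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    for start in range(0, len(items), batch_size):
        yield list(items[start:start + batch_size])


requests_queue = [f"request-{i}" for i in range(10)]
for batch in batched(requests_queue, 4):
    print(len(batch))  # prints 4, 4, 2
```

Start with a small batch size, watch GPU memory, and raise it until you approach your VRAM limit.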

2. PaCoRe Mode Tuning

Adjust the number of parallel rollouts based on requirements:

- Standard mode: 1 rollout (baseline performance)

- PaCoRe-4: 4 rollouts (moderate accuracy boost)

- PaCoRe-16: 16 rollouts (maximum accuracy)

3. Input Optimization

Optimize image inputs for efficiency:

- Resize images to appropriate resolution (728×728 or smaller)

- Use JPEG compression for storage efficiency

- Batch similar-sized images together
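For the resizing step, a small helper can compute the target size while preserving aspect ratio. The sketch below is pure Python; the 728-pixel limit comes from the global view resolution above, and the function name is made up for illustration.

```python
from typing import Tuple


def fit_within(width: int, height: int, max_side: int = 728) -> Tuple[int, int]:
    """Scale (width, height) down so the longest side is at most `max_side`.

    Images that already fit are returned unchanged (never upscaled).
    """
    longest = max(width, height)
    if longest <= max_side:
        return width, height
    scale = max_side / longest
    return max(1, round(width * scale)), max(1, round(height * scale))


print(fit_within(1456, 728))  # (728, 364)
print(fit_within(500, 400))   # (500, 400), already small enough
```

With Pillow installed, the result can be passed straight to `image.resize(fit_within(*image.size))`.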

4. Caching Strategies

Implement caching for repeated queries:

- Cache model outputs for identical inputs

- Use KV-cache optimization for sequential reasoning

- Implement LRU cache for memory efficiency
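Here is one way the output cache could look: a small LRU cache keyed by a hash of the image bytes and the prompt. This is a sketch under assumptions; `ResponseCache` and `fake_infer` are made-up names, and caching only makes sense if decoding is deterministic (for example, greedy decoding), since sampled outputs differ between calls.

```python
import hashlib
from collections import OrderedDict
from typing import Callable


class ResponseCache:
    """LRU cache for model responses, keyed by image bytes and prompt."""

    def __init__(self, infer: Callable[[bytes, str], str], max_entries: int = 256):
        self._infer = infer
        self._max_entries = max_entries
        self._entries: "OrderedDict[str, str]" = OrderedDict()

    @staticmethod
    def _key(image_bytes: bytes, prompt: str) -> str:
        digest = hashlib.sha256()
        digest.update(image_bytes)
        digest.update(b"\x00")  # separator between image and prompt
        digest.update(prompt.encode("utf-8"))
        return digest.hexdigest()

    def __call__(self, image_bytes: bytes, prompt: str) -> str:
        key = self._key(image_bytes, prompt)
        if key in self._entries:
            self._entries.move_to_end(key)  # mark as most recently used
            return self._entries[key]
        result = self._infer(image_bytes, prompt)
        self._entries[key] = result
        if len(self._entries) > self._max_entries:
            self._entries.popitem(last=False)  # evict least recently used
        return result


calls = []


def fake_infer(image_bytes: bytes, prompt: str) -> str:
    """Hypothetical stand-in for a real model call."""
    calls.append(prompt)
    return f"answer to: {prompt}"


cached = ResponseCache(fake_infer, max_entries=2)
cached(b"img", "What is shown?")
cached(b"img", "What is shown?")  # served from the cache
print(len(calls))  # prints 1
```

Hashing the raw bytes means two visually identical images with different encodings count as different inputs, which is the safe default.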

Comparison with Alternative Vision-Language Models

To understand Step3-VL-10B's position in the landscape:

vs. GPT-4V (Closed-source)

Step3-VL-10B Advantages:

- Open-source and freely available

- Can be self-hosted

- Lower inference costs

- Comparable STEM reasoning performance

GPT-4V Advantages:

- Broader general knowledge

- More polished user experience

- Continuous updates and improvements

vs. Claude Vision (Closed-source)

Step3-VL-10B Advantages:

- Open-source deployment

- Specialized STEM reasoning

- Lower latency for self-hosted deployment

Claude Vision Advantages:

- Broader reasoning capabilities

- Better at nuanced understanding

- Integrated with Claude ecosystem

vs. Open-source Alternatives (LLaVA, Qwen-VL)

Step3-VL-10B Advantages:

- Superior STEM reasoning performance

- Better OCR and document understanding

- More efficient parameter usage

- Stronger GUI understanding

LLaVA/Qwen-VL Advantages:

- Smaller model variants available

- Broader community support

- More deployment examples

Getting Started with Step3-VL-10B

Step 1: Environment Setup

    # Create virtual environment
    python -m venv step3_env
    source step3_env/bin/activate

    # Install dependencies
    pip install torch torchvision torchaudio --index-url https://download.pytorch.org/whl/cu118
    # Quote the version specifier so the shell does not treat ">" as a redirect
    pip install "transformers>=4.57.0"
    pip install pillow requests

Step 2: Download Model

    # Using Hugging Face CLI
    huggingface-cli download stepfun-ai/Step3-VL-10B --local-dir ./step3-vl-10b

Step 3: Run Inference

    import torch
    from PIL import Image
    from transformers import AutoProcessor, AutoModelForCausalLM

    # Load model from the local directory downloaded in Step 2
    model_path = "./step3-vl-10b"
    processor = AutoProcessor.from_pretrained(model_path, trust_remote_code=True)
    model = AutoModelForCausalLM.from_pretrained(
        model_path,
        trust_remote_code=True,
        device_map="auto",
        torch_dtype="auto"
    ).eval()

    # Load image
    image = Image.open("path/to/image.jpg")

    # Prepare input
    messages = [
        {
            "role": "user",
            "content": [
                {"type": "image", "image": image},
                {"type": "text", "text": "Analyze this image in detail."}
            ]
        }
    ]

    # Generate response
    inputs = processor.apply_chat_template(
        messages, add_generation_prompt=True, tokenize=True,
        return_dict=True, return_tensors="pt"
    ).to(model.device)

    # Disable gradient tracking during inference to save memory
    with torch.no_grad():
        generate_ids = model.generate(**inputs, max_new_tokens=2048)

    # Decode only the newly generated tokens, skipping the prompt
    response = processor.decode(generate_ids[0, inputs["input_ids"].shape[-1]:], skip_special_tokens=True)
    print(response)

Limitations and Considerations

While Step3-VL-10B is impressive, understanding its limitations is important:

1. Inference Latency and Memory

- Requires 24GB VRAM minimum

- Inference time: 5-15 seconds per image (depending on complexity)

- PaCoRe mode increases latency proportionally

2. Knowledge Cutoff

- Training data cutoff: Early 2026

- May lack information about very recent events

- Requires fine-tuning for domain-specific knowledge

3. Language Support

- Primarily optimized for English and Chinese

- Other languages supported but with lower performance

- Multilingual reasoning may be less robust

4. Specialized Tasks

- Not optimized for real-time video processing

- Limited support for audio-visual reasoning

- May struggle with highly specialized domains without fine-tuning

Future Developments and Roadmap

The vision-language model landscape continues to evolve rapidly. Potential future developments for Step3-VL-10B include:

- Quantized variants: INT8 and INT4 versions for edge deployment

- Smaller models: 3B and 5B parameter variants for resource-constrained environments

- Multimodal extensions: Integration with audio and video understanding

- Fine-tuned variants: Domain-specific versions for specialized applications

- Improved efficiency: Further optimization of the PE-lang architecture

Conclusion

Step3-VL-10B represents a significant achievement in efficient vision-language model design. By combining innovative architecture (PE-lang encoder), sophisticated training strategies (multi-stage pipeline with RL), and careful parameter allocation (1.8B + 8B split), Stepfun AI has created a model that delivers exceptional performance while remaining practical for self-hosted deployment.

Whether you're building STEM tutoring systems, document processing pipelines, or GUI automation tools, Step3-VL-10B offers a compelling combination of capability, efficiency, and accessibility. The model's open-source Apache 2.0 license ensures you can deploy it freely in both research and commercial applications.

The era of efficient, capable vision-language models is here. Step3-VL-10B is leading the charge.

Resources: